It’s no secret that Amazon’s Alexa dominates the smart speaker market, but is she really the best choice? According to a new study, the answer is no, but I’m not sure I agree. For the second year in a row, researchers tested which smart speaker is, well, the smartest. Google’s Assistant won, but after a closer look at some extenuating factors, I’m still 100% team Alexa.

So, before you run and chuck your Echo device for Google, let’s look at the study and why I think it’s wrong.

Stone Temple Smart Speaker Quiz

For those of you who don’t know, for two years running Stone Temple has conducted a quiz, asking Google’s Assistant, Amazon’s Alexa, Apple’s Siri, and Microsoft’s Cortana the same set of questions. Google’s Assistant was tested both on a phone and on the Home speaker.

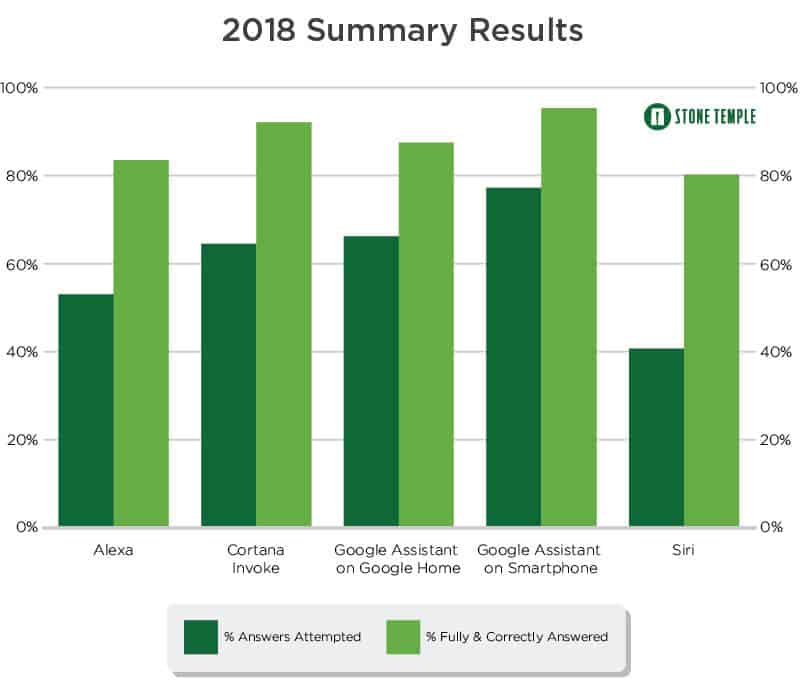

The results showed that Google’s Assistant not only answered the most questions correctly, but also attempted to answer more questions than the others. Cortana came in second, followed by Alexa and Siri.

Yes, that sounds bad, but not when you look a bit closer. To start, all the speakers had a correct answer rate of over 80%, which means they’re all pretty smart and can answer a majority of questions. If you own an Echo or Amazon Fire tablet, you already know that. To me, this alone makes the debate null and void: answering questions is just one of Alexa’s many uses, and 80% or better is more than enough for me. Plus, why wasn’t Alexa tested on a Fire tablet or phone the way Google’s Assistant was? That makes the results feel skewed.

Source: marketingcharts.com

In addition, a “wrong” answer isn’t necessarily wrong. As the study reports, an answer marked wrong often just didn’t give enough information, so it could have been right, only incomplete. That skews the results, because it’s hard to know how many answers were really wrong. Alexa may have answered correctly, just not in as much depth as Google, and depending on how you use and interact with her, that may not matter.

Source: https://www.go-gulf.com/blog/virtual-digital-assistants/

But the biggest issue I have is that the number of questions attempted is not equal. Google’s Assistant attempted more questions, so the fact that she got more correct answers seems like a given and doesn’t impress me. If Google and Alexa had attempted the same number of questions and Google got more right, I would say Google has the edge. But Alexa tried to answer fewer questions and still had a high rate of correct responses, a rate pretty close to Google’s, and that is way more impressive to me!

And when you factor in that Alexa is compatible with more smart devices, well, the winner is clear to me. I’m not going to switch brands because Google’s Assistant has a 10% or so higher rate of correct answers. Don’t get me wrong, it’s a great accomplishment, just not great enough to get me, and I’ll bet a lot of other established Alexa users, to jump ship.